CERN releases 300TB of Large Hadron Collider data so everyone can study particle physics for free

Hooray for science and freedom of information – CERN has released over 300TB of data from the Large Hadron Collider (LHC) onto the internet for free to enable anyone to study particle physics and use the data in their own research.

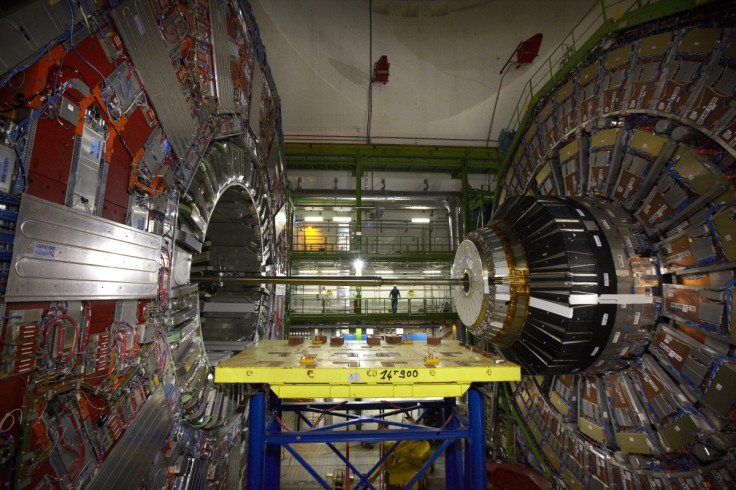

The data is available on the CERN Open Data Portal, which was developed by CERN's IT Department and Scientific Information Service in collaboration with the researchers running the Compact Muon Solenoid (CMS) general-purpose particle physics detector at the LHC.

"Members of the CMS Collaboration put in lots of effort and thousands of person-hours each of service work in order to operate the CMS detector and collect these research data for our analysis," said Kati Lassila-Perini, a CMS physicist who leads these data-preservation efforts.

"However, once we've exhausted our exploration of the data, we see no reason not to make them available publicly. The benefits are numerous, from inspiring high-school students to the training of the particle physicists of tomorrow. And personally, as CMS's data-preservation coordinator, this is a crucial part of ensuring the long-term availability of our research data."

The high-quality open data represents about half of the data collected at the LHC by the CMS detector in 2011, and includes over 100TB of data from proton collisions at 7 TeV. The information has been released in several formats – primary datasets (the original data CERN scientists would have analysed) and derived datasets (simplified datasets that can easily be analysed by universities and high schools).

Advanced virtual machine available for free

But most interesting is the fact that CERN has also included tools to make it easy for people to analyse the data, even if they do not have access to expensive, specialised scientific software.

A virtual machine is a type of computer that runs within a piece of software. Similar to a physical computer, it features an operating system and lets users run applications, so using a virtual machine is sort of like using a computer within a computer.

To make the data more easily accessible so that scientists and particle-physicists-in-training can drill down to the science without lots of technical hurdles, CERN is providing a virtual machine image based on its own CernVM system – essentially a virtual machine anyone can download, featuring an environment that comes preloaded with all the software needed to categorise and dissect the LHC data.

Scientists are usually very protective about their data as there is an ongoing arms race in the science world between rival academic institutions all working to make discoveries in a wide range of scientific fields, and one team's success can often affect the work of other teams trying to research the same thing.

However, CERN, which operates the largest particle physics laboratory in the world, says that it was prompted to share its data after a group of theoretical physicists at MIT contacted CERN wanting to study the substructure of jets, which are showers of hadron clusters recorded in the CMS detector. Although CERN obviously had access to data about this particular topic, the research organisation was not studying it, and realised that it would not be a bad idea to share its data.

"As scientists, we should take the release of data from publicly funded research very seriously," says Salvatore Rappoccio, a CMS physicist who worked with the MIT theorists. "In addition to showing good stewardship of the funding we have received, it also provides a scientific benefit to our field as a whole. While it is a difficult and daunting task with much left to do, the release of CMS data is a giant step in the right direction."

© Copyright IBTimes 2025. All rights reserved.