15 years of brain research has been invalidated by a software bug, say Swedish scientists

Up to 70% of fMRI analyses produce at least one false positive, challenging the validity of over 40,000 studies.

Scientists from Sweden and the UK have analysed the results produced by the three most common software packages used to analyse fMRI brain scans, and have found that the results are so unreliable, because of flaws in the software's statistical methods including a bug, that 15 years' worth of fMRI brain research could be invalidated.

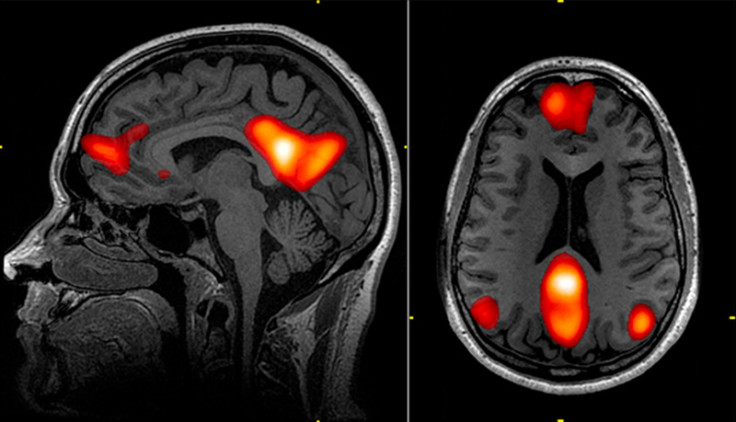

fMRI stands for functional magnetic resonance imaging. It is a functional neuroimaging procedure that uses MRI technology to measure brain activity by detecting changes in cerebral blood flow: when a specific area of the brain is in use, blood flow to that region increases.

fMRI software works by dividing a scan of the brain into tiny voxels (volume elements, the three-dimensional equivalent of pixels, each representing a small cube of brain tissue). The software tests each voxel for signs of activity and then searches for clusters of neighbouring active voxels in order to map blood flow in different regions of the brain.
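To make the clustering step concrete, here is a minimal Python sketch; it is not code from SPM, FSL or AFNI. It assumes a statistic map stored as a nested list indexed `[x][y][z]`, an arbitrary cluster-defining threshold, and that voxels sharing a face count as neighbours. The function name `find_clusters` is hypothetical. Voxels above the threshold are grouped into clusters with a breadth-first search.

```python
from collections import deque


def find_clusters(stat_map, threshold):
    """Group voxels above `threshold` into clusters of face-adjacent neighbours.

    stat_map is a nested list indexed as stat_map[x][y][z]. Returns a list of
    clusters, each a list of (x, y, z) coordinates.
    """
    nx, ny, nz = len(stat_map), len(stat_map[0]), len(stat_map[0][0])
    # The six face-adjacent neighbour offsets in three dimensions
    offsets = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    seen = set()
    clusters = []
    for x in range(nx):
        for y in range(ny):
            for z in range(nz):
                if (x, y, z) in seen or stat_map[x][y][z] <= threshold:
                    continue
                # Breadth-first search outwards from this seed voxel
                seen.add((x, y, z))
                queue = deque([(x, y, z)])
                cluster = []
                while queue:
                    cx, cy, cz = queue.popleft()
                    cluster.append((cx, cy, cz))
                    for dx, dy, dz in offsets:
                        px, py, pz = cx + dx, cy + dy, cz + dz
                        if (0 <= px < nx and 0 <= py < ny and 0 <= pz < nz
                                and (px, py, pz) not in seen
                                and stat_map[px][py][pz] > threshold):
                            seen.add((px, py, pz))
                            queue.append((px, py, pz))
                clusters.append(cluster)
    return clusters


# A tiny hypothetical 3 by 3 by 1 statistic map
demo = [[[0.0], [3.5], [3.2]],
        [[0.1], [0.2], [4.1]],
        [[2.9], [0.0], [0.0]]]
print([len(c) for c in find_clusters(demo, threshold=2.3)])  # [3, 1]
```

With a threshold of 2.3, three connected voxels above the threshold form one cluster and the isolated voxel forms a second, single-voxel cluster.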

Researchers from Linköping University in Sweden and the University of Warwick in the UK, led by neuroscientist Anders Eklund, gathered resting-state fMRI data from 499 healthy people, drawn from databases around the world, and randomly divided the participants into groups of 20.

70% chance of finding a false positive

The scientists then compared randomly drawn groups against one another, running around 3 million group analyses in total with the three most popular software packages for fMRI analysis: SPM, FSL and AFNI.

Because resting-state data from healthy people contains no real effect to find, the researchers expected the software to report at least one false positive in about 5% of analyses, matching the standard significance threshold. Instead, they found that the three packages produced at least one false positive in up to 70% of analyses.
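The reasoning behind the 5% expectation can be illustrated with a toy simulation, a simplified sketch rather than the researchers' actual pipeline. It assumes pure random noise instead of real scans, 50 independent voxels, a simple z-test with a known variance of 1, and a Bonferroni correction; the function name and all parameters are hypothetical. Because the data contains no real effect, the fraction of analyses with at least one detection is the familywise false-positive rate.

```python
import math
import random


def null_familywise_error(correct=True, n_subjects=499, group_size=20,
                          n_voxels=50, n_analyses=1000, alpha=0.05, seed=0):
    """Fraction of simulated null group comparisons with at least one 'active' voxel.

    Hypothetical toy model: every subject's value at every voxel is independent
    N(0, 1) noise, so any detection is a false positive by construction.
    """
    rng = random.Random(seed)
    data = [[rng.gauss(0.0, 1.0) for _ in range(n_voxels)]
            for _ in range(n_subjects)]
    # Bonferroni: split the 5% budget across all voxels when correct=True
    per_voxel_alpha = alpha / n_voxels if correct else alpha
    # Standard error of a difference of two group means, assuming sigma = 1
    se = math.sqrt(2.0 / group_size)
    hits = 0
    for _ in range(n_analyses):
        # Draw two disjoint random groups from the pool of subjects
        chosen = rng.sample(range(n_subjects), 2 * group_size)
        g1, g2 = chosen[:group_size], chosen[group_size:]
        for v in range(n_voxels):
            m1 = sum(data[s][v] for s in g1) / group_size
            m2 = sum(data[s][v] for s in g2) / group_size
            z = (m1 - m2) / se
            p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided normal p-value
            if p < per_voxel_alpha:
                hits += 1  # one false positive is enough to count this analysis
                break
    return hits / n_analyses


print("with correction:", null_familywise_error(correct=True))
print("without correction:", null_familywise_error(correct=False))
```

With the correction switched on, the rate should come out in the neighbourhood of the nominal 5%; switching it off shows how quickly the chance of at least one false positive climbs when many voxels are tested at once.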

A false positive is when a test result wrongly indicates that something is present when it is not. The researchers found that this was caused in part by a software bug in which an algorithm made the minimum cluster size too small, so that clusters were judged more significant than they really were.
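A cluster-size threshold is commonly set by simulation: generate many noise-only images, record the largest cluster in each, and pick the size that only 5% of noise images reach. The sketch below illustrates that general idea in two dimensions; it is not AFNI's implementation, and the box-filter smoothing, image size, threshold of 2.3 and function names are all assumptions. Comparing a calibrated threshold with one that is deliberately halved shows how an underestimated cluster size lets more noise clusters through as false positives.

```python
import math
import random
from collections import deque


def smooth_noise(rng, size, radius=1):
    """Hypothetical null image: white noise blurred by a box filter.

    Each pixel is the sum of raw values in its neighbourhood divided by
    sqrt(count), so it stays N(0, 1) while neighbours become correlated,
    loosely mimicking smoothed fMRI data.
    """
    raw = [[rng.gauss(0.0, 1.0) for _ in range(size)] for _ in range(size)]
    out = [[0.0] * size for _ in range(size)]
    for i in range(size):
        for j in range(size):
            total, count = 0.0, 0
            for di in range(-radius, radius + 1):
                for dj in range(-radius, radius + 1):
                    a, b = i + di, j + dj
                    if 0 <= a < size and 0 <= b < size:
                        total += raw[a][b]
                        count += 1
            out[i][j] = total / math.sqrt(count)
    return out


def max_cluster_size(image, cdt):
    """Size of the largest 4-connected cluster of pixels above the threshold `cdt`."""
    size = len(image)
    seen = set()
    largest = 0
    for i in range(size):
        for j in range(size):
            if (i, j) in seen or image[i][j] <= cdt:
                continue
            seen.add((i, j))
            queue, n = deque([(i, j)]), 0
            while queue:
                ci, cj = queue.popleft()
                n += 1
                for a, b in ((ci + 1, cj), (ci - 1, cj), (ci, cj + 1), (ci, cj - 1)):
                    if (0 <= a < size and 0 <= b < size
                            and (a, b) not in seen and image[a][b] > cdt):
                        seen.add((a, b))
                        queue.append((a, b))
            largest = max(largest, n)
    return largest


def cluster_extent_threshold(n_sims=200, size=24, cdt=2.3, alpha=0.05, seed=1):
    """Smallest size k such that at most `alpha` of null images contain a cluster of size >= k."""
    rng = random.Random(seed)
    maxima = [max_cluster_size(smooth_noise(rng, size), cdt) for _ in range(n_sims)]
    k = 1
    while sum(m >= k for m in maxima) / n_sims > alpha:
        k += 1
    return k


def familywise_rate(k, n_sims=200, size=24, cdt=2.3, seed=2):
    """Fraction of fresh null images in which some cluster reaches size k (a false positive)."""
    rng = random.Random(seed)
    return sum(max_cluster_size(smooth_noise(rng, size), cdt) >= k
               for _ in range(n_sims)) / n_sims


k = cluster_extent_threshold()
print("calibrated k:", k, "false-positive rate:", familywise_rate(k))
print("halved k:", max(1, k // 2), "false-positive rate:", familywise_rate(max(1, k // 2)))
```

Because the same fresh noise images are scored under both thresholds, the halved threshold can never produce a lower false-positive rate than the calibrated one.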

This means that the fMRI software could have been reporting brain activity in regions where in fact there was none, so thousands of fMRI studies could have questionable results.

But the software bug only affects AFNI

"Functional MRI is 25 years old, yet surprisingly its most common statistical methods have not been validated using real data," the researchers wrote in their paper.

"In theory, we should find 5% false positives (for a significance threshold of 5%), but instead we found that the most common software packages for fMRI analysis (SPM, FSL, AFNI) can result in false positive rates of up to 70%. These results question the validity of some 40,000 fMRI studies and may have a large impact on the interpretation of neuroimaging results."

While this news is devastating for the global fMRI research community, Discover Magazine is sceptical about how serious the issue is, noting that although the bug is serious, it affects only the AFNI package and not SPM or FSL.

Discover also points out that the researchers' findings apply only to fMRI studies that use activation mapping, not to all fMRI studies, and that a 70% chance of the software finding at least one false positive in an analysis does not mean that 70% of all positive results are false.
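The difference between those two percentages can be shown with invented numbers, not figures from the paper: ten hypothetical analyses each report nine genuine clusters, and seven of them also report one spurious cluster. The familywise rate counts any analysis that contains a false positive, while the false fraction counts spurious clusters among all reported clusters. The function name `summarize` is hypothetical.

```python
def summarize(analyses):
    """analyses: list of (true_clusters, false_clusters) counts, one pair per analysis."""
    n = len(analyses)
    # Share of analyses containing at least one false positive
    familywise = sum(1 for _, fp in analyses if fp > 0) / n
    # Share of all reported clusters that are false positives
    total_fp = sum(fp for _, fp in analyses)
    total_reported = sum(tp + fp for tp, fp in analyses)
    false_fraction = total_fp / total_reported if total_reported else 0.0
    return familywise, false_fraction


# Invented example: 7 analyses with one spurious cluster, 3 without
analyses = [(9, 1)] * 7 + [(9, 0)] * 3
familywise, false_fraction = summarize(analyses)
print(familywise, round(false_fraction, 3))  # 0.7 0.072
```

Here 70% of the analyses contain a false positive, yet only 7 of the 97 reported clusters, about 7%, are false.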

The paper, entitled Cluster failure: Why fMRI inferences for spatial extent have inflated false-positive rates, is published in the journal Proceedings of the National Academy of Sciences (PNAS).

© Copyright IBTimes 2025. All rights reserved.