Bionic Humans or Killer Robots - Where does the future lie?

A lack of regulation raises questions over the future of robotics and who ultimately takes responsibility - man or machine.

While robotics and artificial intelligence are advancing at an unprecedented pace, some people don't necessarily see this as a good thing. Even advancements in prosthetics - which have traditionally been seen as entirely positive - raise issues which need to be addressed.

Speaking at Google's Big Tent conference on the outskirts of London this week, a group of experts, including the chair of the Stop the Killer Robots campaign, Dr. Noel Sharkey, debated the future in a session entitled "Innovation in the next ten years - Bionic Humans or Killer Robots."

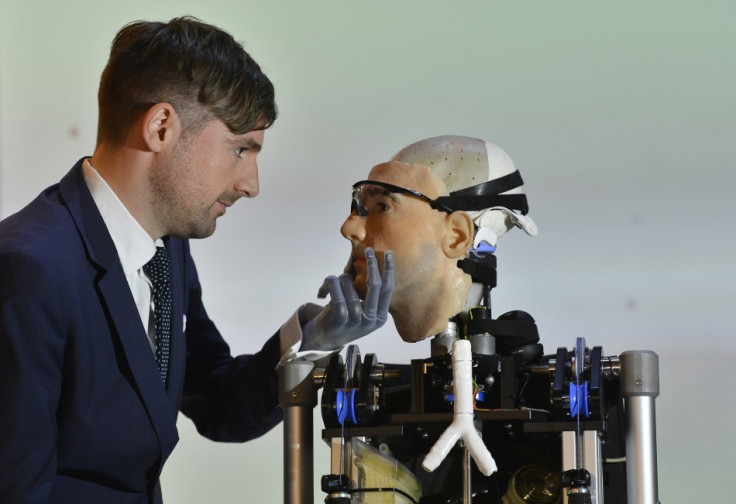

Also sharing the stage was the Bionic Man, an artificial man constructed entirely from the most sophisticated bionic and prosthetic technology available today.

The Bionic Man was built for a Channel 4 TV show earlier this year when psychologist Bertolt Meyer, who has a bionic hand himself, sought out scientists working in the robotics field to see just how far the technology could go.

Meyer said that prosthetics today are simply not good enough, having a limited range of functions and features. However, they are improving fast, and we will soon be looking at limbs which are connected directly to our brains. Meyer envisions a future where these so-called "super limbs" could raise a much more interesting question:

"Will people choose to have healthy limbs replaced by artificial ones which are better?"

As well as raising questions about how far these human enhancements can go, the technology raises concerns about how secure these prosthetics and embedded chips will be.

Meyer handed a smartphone to moderator Jon Snow during the presentation and by simply tapping the screen Snow was able to make Meyer's bionic hand move remotely.

While the app can only be installed on phones verified as belonging to wearers of this particular bionic arm, which is called the i-Limb Ultra and built by Scottish company Touch Bionics, it also raises questions about security, as the vulnerability of smartphones to cyber-attacks has become a much-discussed subject within the security industry in recent years.

No regulation

When asked whether the regulations governing the use of these products were sufficient, Meyer admitted that he didn't think there were any.

There have been numerous examples of smartphones being hacked so they can be controlled remotely, leading to the possibility of these app-controlled prosthetics and chips becoming potentially lethal.

Sharkey highlighted one such example from a couple of years ago, when it was proven that an insulin pump, which diabetics had installed to automatically regulate their blood-sugar levels, could be hacked. Using a Bluetooth device, anyone within 100 metres could send signals to the pump directing it to release lethal doses of insulin into the body.

Meyer said the advancements in this type of technology have been unprecedented in recent years, but the question the scientific community has failed to ask is: "Should we be doing this?"

Memory

He spoke about a scientist in California who had been working for 13 years on a chip to be implanted in Alzheimer's sufferers' brains in order to improve memory capacity. Tests on rats had proven successful, but the chip was also shown to improve the memory function of healthy rats. When Meyer asked if this was the right thing to do, the scientist said he didn't know and hadn't even considered the question.

Sharkey added: "There are great things tech can give you but we must be aware and be cautious of the problems too. It is very difficult to get it right but we must try all the time."

Sharkey's Stop the Killer Robots campaign is seeking to end the ability of automated drones to 'decide' which targets should be fired upon, an ability which has removed the decision-making from human hands. While Sharkey conceded the ultimate responsibility lies with humans, automated drones had "muddied" the waters somewhat as to whether a death is ascribed to man or machine.

Carole Cadwalladr, a columnist with the Observer newspaper who has written extensively on this subject, said this would be a much bigger issue if the use of drones was not limited to countries thousands of miles away: "We would have more of a debate if Pakistanis were sending drones into Surrey and accidentally killing small children."

When the possibility of a Terminator-style apocalypse, in which machines would become self-aware and wipe humans from the face of the earth, was mooted by Snow, Sharkey, who has been working in artificial intelligence for 30 years, dismissed the idea: "I don't think we will lose control of the robots. I don't think we'll get to the point of Terminators."

© Copyright IBTimes 2025. All rights reserved.