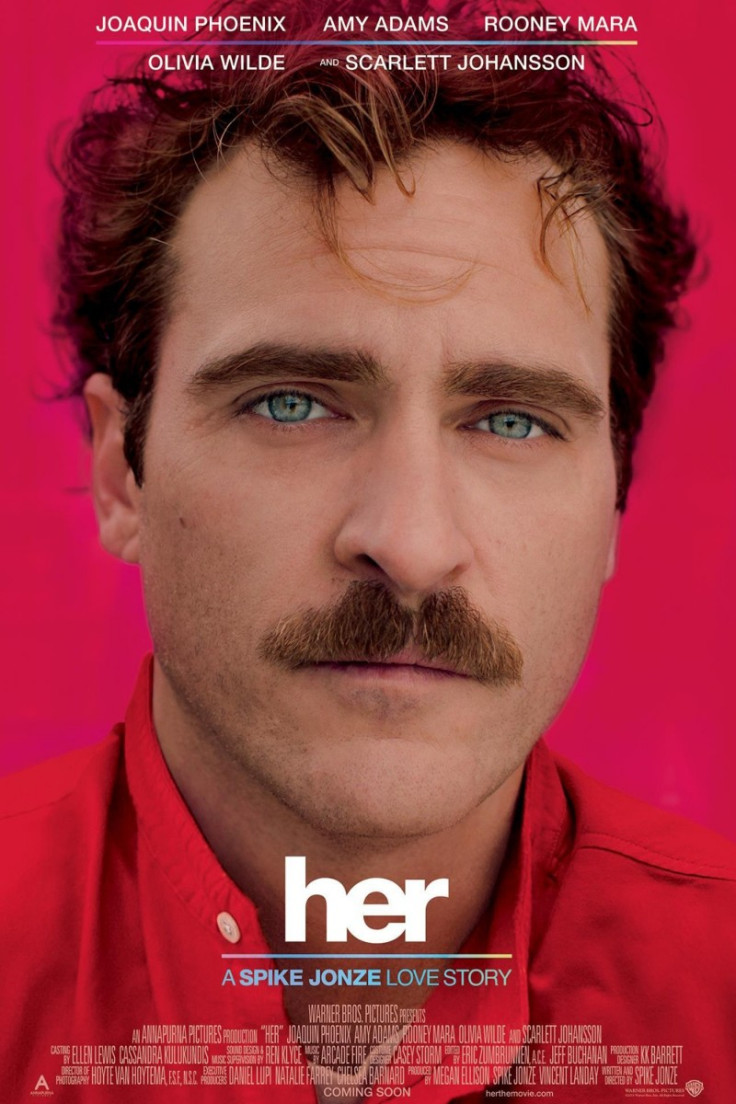

Could You Fall in Love with Your Computer?

In the new science fiction film Her, Joaquin Phoenix plays Theodore Twombly, a lonely writer who falls in love with a computer operating system that has the voice of Scarlett Johansson. Called Samantha, she not only understands and responds to his needs and demands but is also caring and compassionate, to the extent that there appears to be a real human personality inside the small device he carries with him at all times.

The artificial intelligence and speech technology behind this personal assistant are far more advanced than anything currently available, but how close are they to becoming reality? Could our interactions with a computer one day become so lifelike that we could fall in love with a machine?

"The concept of a personal assistant is realised but not to the level of sophistication that is demonstrated in the movie Her," says John West, senior solutions architect at Nuance, the world's leading speech technology company.

"The voice is a real voice, it's Scarlett Johansson, whereas our text-to-speech engines are becoming more expressive, but are certainly not at the level of the voice of Scarlett Johansson. We're not quite there yet to be able to do anything to the degree of accuracy that is portrayed in the film," he adds.

Rapid Rise of Speech Technology

Despite being nowhere near as advanced as the film's intelligent avatar, natural language user interfaces have rapidly progressed in recent years.

In 1966, MIT professor Joseph Weizenbaum created Eliza, a chatterbot named after Eliza Doolittle in George Bernard Shaw's play Pygmalion and designed to hold a conversation with a human user. It inspired others to improve on the idea, and Her director Spike Jonze has said that it was the Alice (Artificial Linguistic Internet Computer Entity) chatterbot in particular that inspired the idea for the film.

Even today the range of responses from Alice is impressive. I asked it, "Do you think I could fall in love with a computer?" to which I received the response, "I think you could, if you put your mind to it."

But recent programmes have moved away from these early text-based forms towards much more intuitive and expressive personas, in an attempt to emulate human conversation. Alaska Airlines have their own customer-service bot, Jenn, which, since its launch in 2008, has communicated with millions of flyers at all hours via instant message.

With the launch of Apple's intelligent personal assistant Siri for the iPhone in 2011, and subsequent rivals such as Samsung's S Voice, all powered by Nuance's voice recognition software, millions around the world have become familiar with speaking to their phones and tablets in their day-to-day lives.

The work being done now aims to make devices more attuned to their users' needs: to understand who is talking to them and to respond in a more human way.

"The technology that we're developing right now is very much built on a persona, so you have a personality of the system. It is able to learn and adapt to the user, so we're making it easier for us as humans to interact with technology. Removing any barriers that are there so it's a natural dialogue," says West.

"We're adapting the models to the individual and to the environment all the time, so we'll see that level of accuracy become better. To be able to establish habits and likes and dislikes that you may have so therefore when you ask it a question, it can provide a relevant result back to you," he says.

Future Integration

One example Nuance have been working on is the use of voice biometrics in smart TVs, so that the set can tell from voice commands who in the household is watching and then, much like streaming services such as Netflix, recommend programmes based on that person's viewing habits.

With wearables the buzzword for 2014, technology looks set to become ever more embedded in our day-to-day lives, and we will need new ways to communicate with these devices effectively. Because it offers a natural, familiar form of interaction, voice technology is likely to become increasingly important in the coming years.

"As the digital world gathers pace it's becoming more and more complex on these devices, so being able to speak to a system, and for it to work out what it is and how you need it is probably the most natural interface of all, it's what we were born with, it's how we've done it in the past, and overcomes the complexities of the technology that is being put in to the market these days," West says.

So, whilst it seems we won't be ditching human relations for ones with our computer anytime soon, the rapid progress being made with speech technology means that how we interact with our machines will continue to become ever closer to how we interact with each other.

Her will be released in cinemas nationwide from February 14.

© Copyright IBTimes 2025. All rights reserved.