Scientists Can Tell What You're Saying Just By Looking at Crisp Packet Vibrations

Crisp packets have emerged as an unlikely surveillance tool, after researchers reconstructed speech from the packets' tiny vibrations using a "visual microphone".

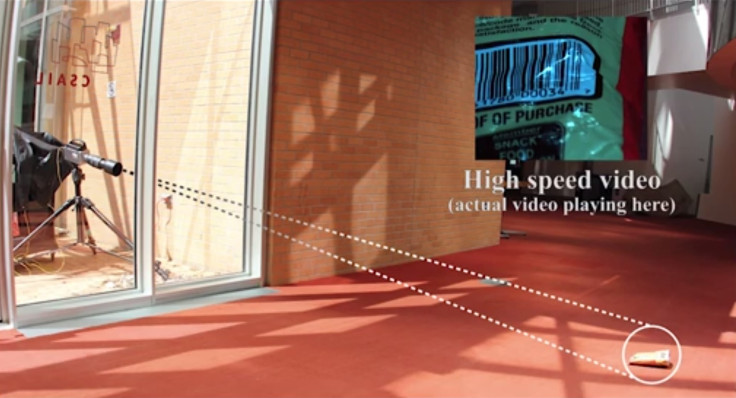

Researchers at the Massachusetts Institute of Technology (MIT), Microsoft and Adobe achieved this by developing an algorithm that can recreate sound based on silent video footage of nearby objects.

As well as packets of crisps, the algorithm can recover sound from the vibrations of other items, such as aluminium foil, glasses of water and house plants.

"When sound hits an object, it causes the object to vibrate," said Abe Davis, an electrical engineering graduate student at MIT and first author of a paper that details the findings. "The motion of this vibration creates a very subtle visual signal that's usually invisible to the naked eye.

"In our work, we show how using only a video of the object and a suitable processing algorithm, we can extract these minute vibrations and partially recover the sounds that produce them - letting us turn everyday visible objects into visual microphones."
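The idea Davis describes can be illustrated with a toy sketch. This is not the researchers' algorithm, which the article does not detail; it is a minimal simulation under stated assumptions. A bright blob in a single row of pixels is shifted by a fraction of a pixel in time with a tone, and the tone is then recovered by tracking the blob's brightness-weighted centre from frame to frame. The frame rate, tone frequency, vibration amplitude and all function names below are hypothetical values chosen for illustration.

```python
import math

def make_frame(offset, width=64, blur=4.0):
    """Render one row of pixels: a soft bright blob centred at width/2 + offset."""
    centre = width / 2 + offset
    return [math.exp(-((x - centre) ** 2) / (2 * blur ** 2)) for x in range(width)]

def centroid(frame):
    """Brightness-weighted mean position, which resolves sub-pixel shifts."""
    total = sum(frame)
    return sum(x * v for x, v in enumerate(frame)) / total

def recover_signal(frames):
    """Turn a sequence of frames into a motion signal centred on zero."""
    positions = [centroid(f) for f in frames]
    mean = sum(positions) / len(positions)
    return [p - mean for p in positions]

# Hypothetical setup: a 100 Hz tone filmed at 2,000 frames per second,
# moving the object by at most one hundredth of a pixel.
FRAME_RATE = 2000
TONE_HZ = 100
AMPLITUDE_PX = 0.01

tone = [AMPLITUDE_PX * math.sin(2 * math.pi * TONE_HZ * n / FRAME_RATE)
        for n in range(200)]
frames = [make_frame(displacement) for displacement in tone]
recovered = recover_signal(frames)

# Largest difference between the recovered motion and the original tone.
worst_error = max(abs(r - t) for r, t in zip(recovered, tone))
print(f"largest recovery error: {worst_error:.2e} pixels")
```

In this noise-free simulation, the motion is far too small to see in any single frame, yet averaging over many pixels makes it measurable. Real footage adds sensor noise, lighting changes and complex object shapes, which this sketch ignores.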

Through this process, Davis and his team were first able to decipher the tune of "Mary Had a Little Lamb" from vibrations in the leaves of a plant.

The sound-processing algorithm was then tested on a bag of crisps filmed from behind sound-proof glass. Remarkably, the visual microphone was able to pick up the words to the same nursery rhyme.

The results of the experiment have already led privacy advocates to warn about how this technology may be used.

"[Visual microphones] emphasise the need to keep external windows completely covered during sensitive communications," said Lauren Weinstein, a Google consultant and policy analyst.

"We can safely assume that intelligence agencies - at a minimum - have been using this technique for some time."

© Copyright IBTimes 2025. All rights reserved.