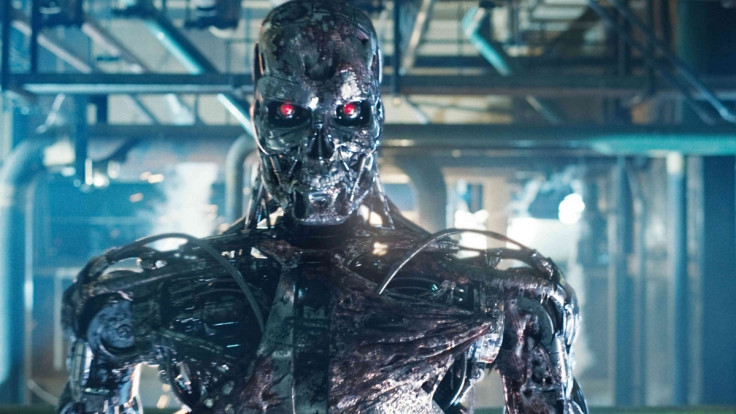

Robot revolution: Scientists launch AI advocacy group to tackle urgent ethical issues

A group of 22 leading robotics scientists, engineers and law scholars have launched an advocacy group in order to advise and lobby governments and enterprises to make sure that robots are being used ethically in light of the robot revolution.

The Foundation for Responsible Robotics was launched on 11 December in London. Founders Dr Aimee van Wynsberghe, Assistant Professor in Ethics of Technology at University of Twente's Department of Philosophy, and Noel Sharkey, robotics professor at the University of Sheffield, are keen for anyone interested in robotics to join the organisation and examine the increasing impact robots have on our lives, no matter the discipline: roboticists, technologists, sociologists and anthropologists as well as lawyers, civil servants and manufacturers.

The robots that exist today are quite useful, even though most can only perform a few simple tasks. However, the fear is that as our daily lives become increasingly automated, governments and companies will push out robots without fully understanding the technology, which could seriously affect privacy and safety.

Humans need to be responsible for the actions of robots

"Robotics has been gradually coming along but there's been a headlong rush in the last two years of them doing a lot more tasks than we thought they could do. No one is looking at privacy or ethical issues, at what's the proper line to take, at what's good or bad. Just because humans are bad at something doesn't mean robots wouldn't be worse," Sharkey told IBTimes UK.

"We're not so interested in artificial intelligence taking over the world, but more how robots will hit the world and making sure we're prepared for that, so looking more at the big dumb robots."

Sharkey and van Wynsberghe's main concern is not that robots will be too clever and take control of our lives, but more that robots are far too stupid to be trusted with important problems, and humans must not cede control.

"We urgently need to promote responsibility for the robots embedded in our society. Robots are only as responsible as the humans who build and use them. We must ensure that the future practice of robotics is for the benefit of mankind rather than for short term gains," said van Wynsberghe.

"To accomplish this, the policies governing robotics must maintain ethical and societal standards of fairness and justice."

Unethical childcare and elderly care robots on the rise

The scientists say there are already several worrying examples of robots being deployed without any thought for ethics and legality. In Japan and Europe, for instance, elderly care robots that monitor old people to make sure they don't have accidents or fall ill are being pushed out at an alarming rate.

Some of these robots are equipped with cameras to monitor the elderly in their homes, so that they can live independently and their families can have peace of mind. But the robots pose privacy risks, as they could catch the elderly in a compromising position, such as in the bathroom.

There are also grave concerns about the companies that are developing childcare robots, as many of the robots look deceptively real. Research has shown that children can bond with these robots more than with humans and develop attachment disorders, to say nothing of the privacy concerns relating to smart toys like Hello Barbie.

And then there are the drones – in the UK, police forces in Devon, Cornwall and Dorset are now trialling the use of drones to help find missing people and photograph crime scenes, while North Dakota has become the first state in the US where it is legal for police to arm drones with tasers, tear gas and rubber bullets.

Efforts to ban killer robots are going well

Together with a group of concerned robotics scientists, Sharkey has already been successful in lobbying the United Nations on the subject of autonomous weapons, also known as killer robots.

Three years after their mission started, there are now 54 NGOs lobbying against killer robots, 60 nations have spoken up in protest and the issue continues to be debated at the annual UN Convention on Certain Conventional Weapons (CCW) in November, which is the first step towards getting autonomous weapons banned for good.

"Our focus is very much on the humans behind the robots, holding the humans responsible, whether it's manufacturers or government. We want to get a clear chain of accountability and responsibility, and that needs to be part of policy," stressed Sharkey.

"There's no notion of the societal impact. We believe that policy makers don't really understand the technology at all, and if policy can't keep up with it, neither can law."

© Copyright IBTimes 2025. All rights reserved.