Microsoft CaptionBot: AI image guessing app really isn't sure who Barack Obama is

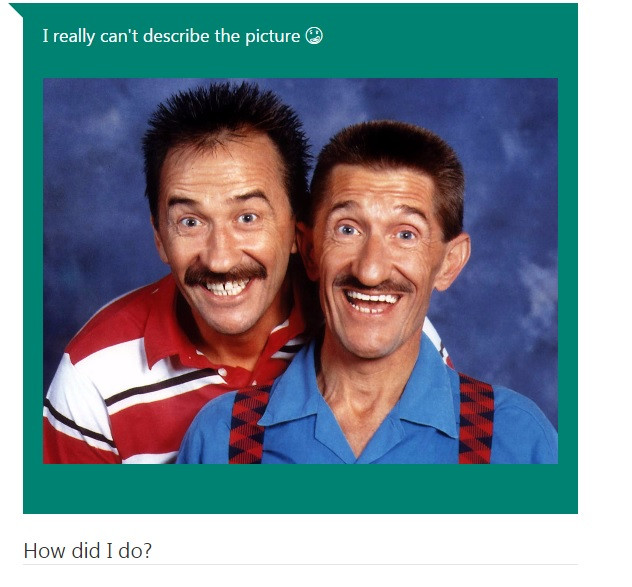

With the disaster of Tay, the Hitler-loving Twitter robot, brushed under the carpet, Microsoft is having another stab at artificial intelligence with CaptionBot, a website which can describe photographs.

Users are asked to upload any photo to the site, then Microsoft's AI system attempts to describe what is in the image. The system can recognise celebrities and understands the basics of image composition but, as some of the following examples show, it isn't yet perfect.

After a morning of experimenting with CaptionBot – and trawling through better, much funnier examples on Twitter – we found the system has no idea who Barack Obama is, often sees objects which are not there, but can correctly identify a dog disguised as a cat.

First up, here is an example of how impressive CaptionBot can be.

Even a dog wearing a convincing cat mask failed to trick CaptionBot.

Well played #captionbot, well played. pic.twitter.com/ZCsCABSnQr

— Saeed Goraya (@sgoraya) April 13, 2016

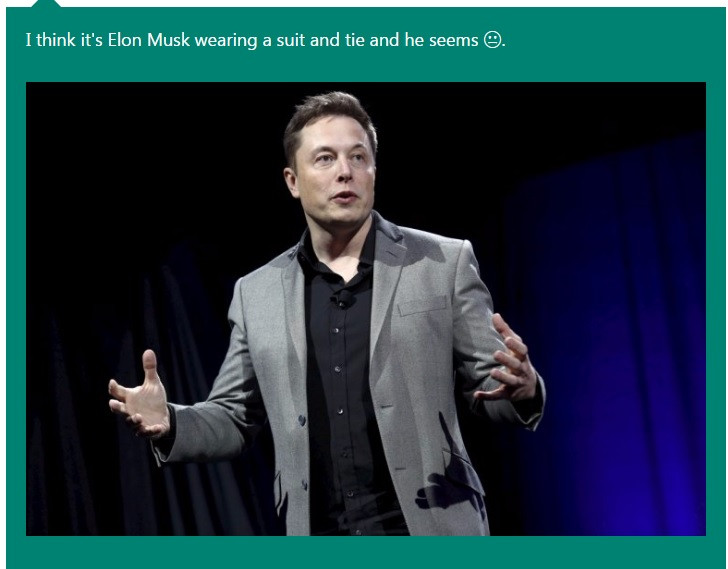

CaptionBot can also identify some celebrities, such as Elon Musk. The system also noticed he is wearing a suit, but failed to spot the lack of a tie.

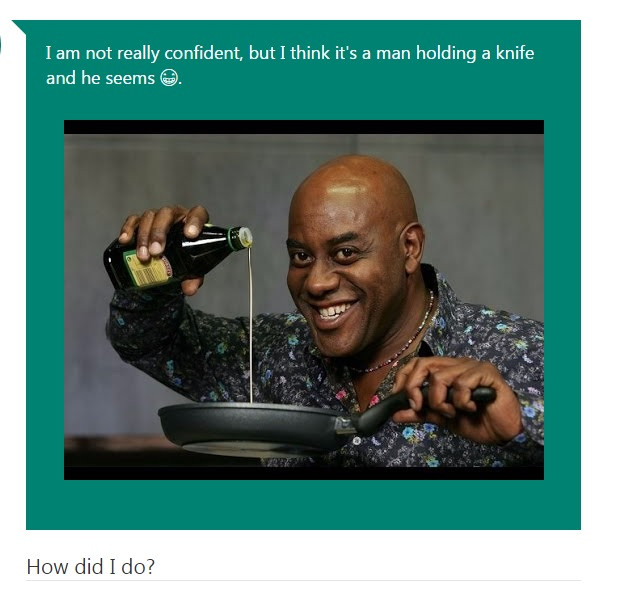

Another trick is CaptionBot's ability to read facial expressions and offer up a suitable emoji. This one is, clearly, spot on. Mistaking a frying pan for a knife is forgivable, I guess.

But CaptionBot isn't perfect. While identifying Angelina Jolie, it failed to understand that she is a woman and described her as "he".

Not quite...@Microsoft #CaptionBot pic.twitter.com/6l4sFSxvFs

— Edward Usher (@EdwardUsher) April 14, 2016

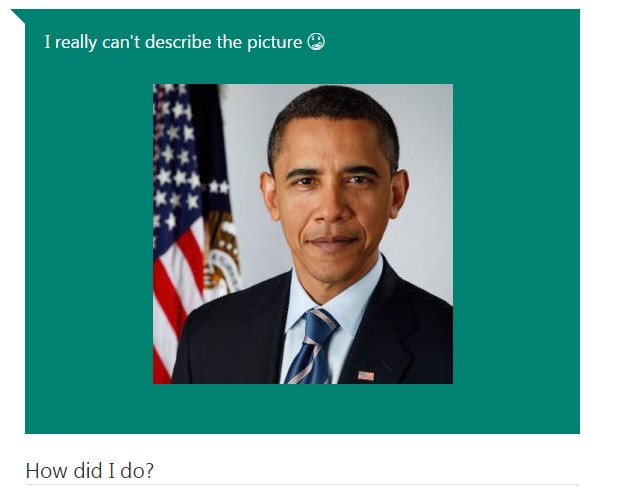

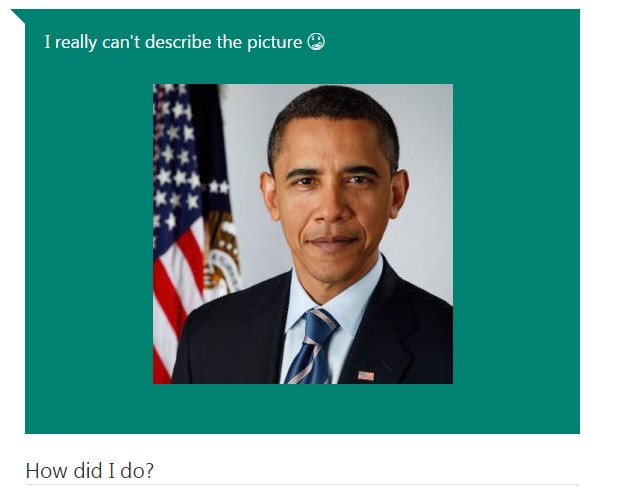

You know when you recognise someone, but can't quite put your finger on who it is? CaptionBot doesn't do that. It fails to describe what is in a photo of Barack Obama at all, never mind who he might be.

And it was just downhill from there, really...

Swing and a miss #captionbot pic.twitter.com/Sz9Jj72s98

— Landarim Chillbeard (@IncineratorBlue) April 14, 2016

omg captionbot pic.twitter.com/3ePdM2y6LV

— Carly Page (@CarlyPage_) April 14, 2016

#captionbot #fail pic.twitter.com/IMq661bqRQ

— Andy Dangerfield (@andydangerfield) April 14, 2016

Close enough @Microsoft #CaptionBot pic.twitter.com/OGusOv0Ht8

— Moritz Grellner (@momosby1992) April 13, 2016

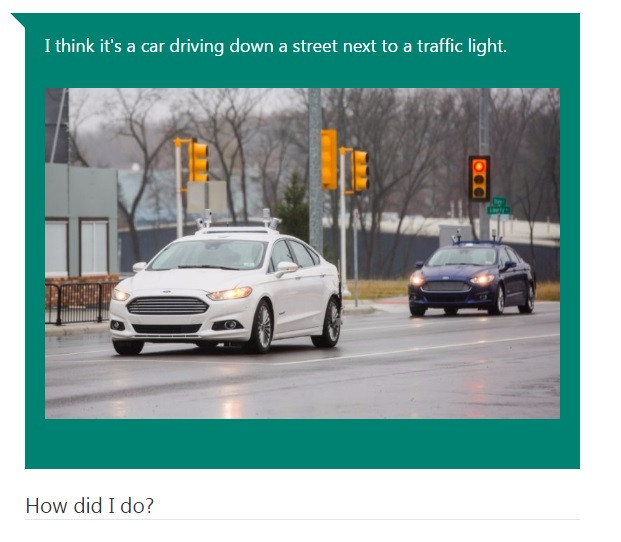

But what about objects rather than people? Here, CaptionBot performs much better.

Clearly, these are early days for artificial intelligence, and experiments like CaptionBot have already proved how smart it can be in the right circumstances. Naturally, people like us and most of Twitter will poke fun at AI and expose its flaws – just as Tay was talked into becoming a massive racist – but this kind of technology shows incredible promise. Just look at how this very system is being used by Microsoft to help describe their surroundings to blind and partially sighted people.

© Copyright IBTimes 2025. All rights reserved.