Android apps will soon have a better understanding of your behaviour via Google Awareness APIs

The APIs will allow app developers to explore various contexts like location, weather and user activity.

Google announced the Google Awareness API (application programming interface) at Google I/O 2016 and has now launched it so that developers can create apps that understand the user's behaviour. Apps built with these APIs could soon assist you in much the same way that Google's own Now system does.

APIs are essentially sets of functions that let developers build applications while accessing the features or data of a platform, in this case Android. The Awareness APIs in particular will allow app developers to explore various contexts, such as general location, the local weather, user activity (such as walking or running) and the location of any nearby Bluetooth 'beacons', to help understand the user.

Google suggests two ways that these APIs can be used:

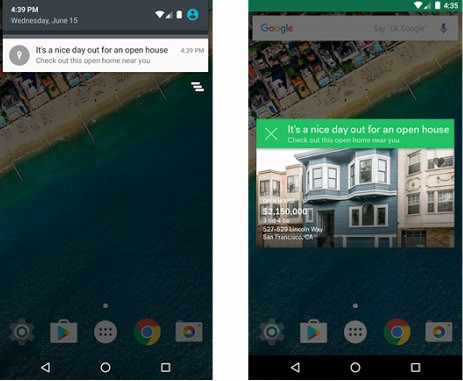

- First is the Snapshot API, where the app requests information about your current context. This may include location, which can help it determine things like the weather and nearby restaurants, ATMs and gas stations.

- The other is the Fence API, where apps can trigger a specific behaviour when a set of conditions is met. For instance, a music app could suggest appropriate music when it detects that a user is exercising or planning to go for a walk.
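
The difference between the two approaches can be illustrated with a small conceptual sketch. This is plain Python, not Android code, and it does not use the real Awareness API: the `Context` and `AwarenessModel` classes, their methods and the sample values are all hypothetical names invented for illustration. It only models the idea that a snapshot is requested on demand, while a fence is registered once and fires when its condition becomes true.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Context:
    """Hypothetical bundle of signals about the user (illustrative only)."""
    activity: str  # e.g. "still", "walking", "running"
    weather: str   # e.g. "clear", "rain"


class AwarenessModel:
    """Toy stand-in for a context service; NOT the real Awareness API."""

    def __init__(self, initial: Context) -> None:
        self._context = initial
        # Each entry is [condition, callback, last_known_state].
        self._fences: List[list] = []

    def snapshot(self) -> Context:
        """Snapshot style: the app asks for the current context on demand."""
        return self._context

    def register_fence(
        self,
        condition: Callable[[Context], bool],
        callback: Callable[[Context], None],
    ) -> None:
        """Fence style: register a condition once and get a callback later."""
        self._fences.append([condition, callback, condition(self._context)])

    def update(self, new_context: Context) -> None:
        """Simulate the device observing a new context."""
        self._context = new_context
        for fence in self._fences:
            condition, callback, was_true = fence
            is_true = condition(new_context)
            # Fire only on the transition from "not met" to "met".
            if is_true and not was_true:
                callback(new_context)
            fence[2] = is_true


# Usage: a music app suggests a playlist when the user starts running.
suggestions: List[str] = []
model = AwarenessModel(Context(activity="still", weather="clear"))
model.register_fence(
    lambda c: c.activity == "running",
    lambda c: suggestions.append("workout playlist"),
)

model.update(Context(activity="running", weather="clear"))  # fence fires
model.update(Context(activity="running", weather="rain"))   # already met, no repeat

print(model.snapshot().weather)
print(suggestions)
```

In this sketch the app never polls for activity changes. It reads the weather only when it asks for a snapshot, and the playlist suggestion is added once, at the moment the running condition is first met.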

Many apps on the Google Play Store already have such traits, especially Snapshot-style features that use location to anticipate a user's next move. Google is hoping to enhance this experience by allowing app developers to use both APIs together and build features that understand the user much better.

© Copyright IBTimes 2025. All rights reserved.