Artificial intelligence: Do not fear the robot revolution

Humans, broadcast earlier this year, became UK broadcaster Channel 4's most popular drama series of all time. It followed a group of highly intelligent 'Synths' (androids) who live with, and form relationships with, the human families that own them.

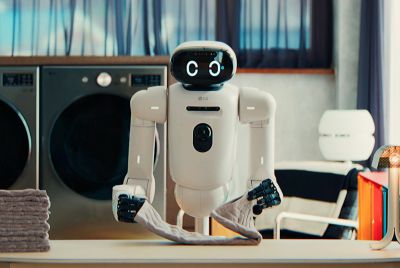

The series is set in the not-too-distant future. Initially the incredibly lifelike Synths are helpful servants, endlessly carrying out the household chores their owners bought them to do. However, unbeknown to their human host families, something was awry with the Synths' artificial intelligence (AI) programming: they were becoming ever more sentient and self-aware.

At that point things got messy, involving everything from robot sex to the moment the Synths collectively questioned their programmed enslavement and yearned for their freedom. Series two will reveal how far they get with that one.

Some of it was far-fetched, but compared to earlier sci-fi comic-book style robot fantasies (including Arnie's monosyllabic Terminator), Humans' portrayal of what might happen when machines end up outsmarting us was enthralling viewing.

Not least because it re-posed, in a contemporary way, the old Frankenstein nightmare: what happens if we unleash creations onto the world that we can no longer control?

Humans is, in fact, just the latest product of an emerging culture that takes these kinds of AI moral and ethical dilemmas very seriously. Some, like the growing movement of so-called transhumanists (who among other things advocate using technology to enhance and better ourselves), believe that the point of 'singularity' (when artificial intelligence matches and exceeds human intelligence) is now a realistic possibility. Some transhumanists have even formed their own political party to promote such debates in the mainstream.

Of course, much of the interest and speculation around artificial intelligence is the result of many significant scientific and technological breakthroughs. Today, perhaps unbeknown to many people, many aspects of how we live are aided and abetted by clever computers and robots. It's not just that computers can now outwit chess grandmasters. Robots and clever AI computing can build cars and drive them autonomously, drive trains, and fly and land planes too. Elsewhere, powerful AI trading algorithms drive much of our financial system.

The proliferation of AI in our lives has meant that we are at an ever greater risk of state-sponsored surveillance. Increasingly sophisticated recognition software can look up our car number plates, and our faces when walking down the street, all caught on CCTV. All of this is done at a scale and speed that no human could ever begin to match. So, in many ways, the AI 'genie' is already out of the bottle.

But while AI computing is getting very powerful, in many other ways, the science behind it is still a very long way off from matching anything close to human intelligence. Even if you (wrongly) believe that artificial intelligence is about replicating the neural networks of the brain — as some AI proponents do — then we are nowhere near achieving that goal.

Take the human brain. It has around 100 billion neurons, with something like 100 trillion connections between them. So far, the best attempt to artificially map a living brain is the OpenWorm project. The team behind it have managed to map the 302 neurons of the roundworm Caenorhabditis elegans into a computer simulation that powers the movement of a simple Lego robot.

While a remarkable feat in itself, this is still light years away from the complexity of the human brain, let alone from an understanding of how to synthesise human consciousness and intelligence. Why is it, then, that so many leading scientists, commentators and the public at large seem so preoccupied with the existential question of whether we should prepare ourselves for an era when clever machines will rule over us?

Numerous university departments and research centres around the world, including the Centre for the Study of Existential Risk at Cambridge University and the Future of Life Institute at MIT, are highly concerned with the potential risks brought about by AI.

Even notable technologists and scientists such as serial entrepreneur Elon Musk, philanthropist Bill Gates, and the physicist Stephen Hawking, all openly talk about the very real threat from AI machines. Hawking went so far as to warn that AI could "spell the end of the human race". Indeed, some think the AI risk is so bad that it has replaced climate change as the number one bogeyman facing humanity.

But in some ways, what is being highlighted is a general anxiety that countless other societies have felt each time a new technology has loomed on the horizon.

However, compared to past eras that underwent huge upheavals — including the rapid period of mechanisation felt during the industrial revolution, or more recently, the dramatic impact the Internet has brought about in how we communicate — the promised 'intelligence explosion' still feels a very long way off.

Perhaps being too fearful at such an early stage itself carries a huge risk, since we could end up curtailing what could be a very exciting yet very nascent technology. While having our own Synths carrying out mundane chores does seem a long way off, today there is a huge role for many more applications of AI and smart robots, not least in helping us go into inhospitable or dangerous places, including exploring the cosmos, starting with Mars.

Just when we could do with super-human help, we risk throwing away the opportunity. Forget AI: the real threat today is our imagination when it comes to mastering the output of our own intelligence.

Martyn Perks is a digital business consultant and writer. He is producing the debate 'Man vs machines: who controls the robots?' at the Battle of Ideas festival on 18 October. IBTimes UK is a media partner for the festival.
