21-Year-Old Texas Student Builds AI OnlyFans Model Using $400 MacBook — Earns $43,000 in a Month

A college student's AI-generated persona raises questions about digital deception and possible fraud

A viral claim about a college student earning $43,000 (£32,500) in a single month from an AI-generated OnlyFans persona has spread rapidly across social media, podcasts and cybersecurity circles since early May.

The conservative podcast Chicks on the Right, hosted by Amy Jo Clark and Miriam Weaver, featured the story during a recent episode alongside broader coverage of digital deception and internet culture.

The original account traces back to a thread by Andrey Superior on X, which laid out the alleged financial mechanics in detail. A 21-year-old student in Austin, Texas, purportedly built a fictional persona named Maya — described as a 22-year-old psychology dropout from the University of Central Florida — using a secondhand MacBook that cost $400 (£302).

1,247 Subscribers and a 20% Platform Cut

The account allegedly attracted 1,247 paying subscribers in its first month, with an average spend of $34 (£26) per fan. One subscriber, described as a software engineer based in Berlin, transferred $1,847 (£1,395) across three weeks, convinced he was speaking with a real woman living in Tampa, Florida.

OnlyFans takes a standard 20 per cent commission on all creator earnings. On $43,000 (£32,500) in gross revenue, that leaves roughly $34,400 (£26,000) before operating costs. The thread listed monthly expenses of approximately $400 (£302) for GPU rentals and cloud computing, with one-off setup costs including $80 (£60) to train the image model and $40 (£30) for voice samples purchased on Fiverr. The initial hardware outlay was the $400 (£302) MacBook itself.
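The thread's figures can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only: every input is an unverified number quoted from the thread, the variable names are invented for this example, and counting the MacBook alongside the other one-off costs is this sketch's own reading rather than something the thread spells out.

```python
# All inputs are figures alleged in the thread; none are independently verified.
SUBSCRIBERS = 1_247
AVG_SPEND_USD = 34
CLAIMED_GROSS_USD = 43_000
PLATFORM_CUT_PERCENT = 20          # OnlyFans' standard commission
MONTHLY_RUNNING_COSTS_USD = 400    # GPU rentals and cloud computing
# One-off costs: image model training, voice samples, secondhand MacBook
# (grouping the laptop here is an assumption of this sketch).
ONE_OFF_COSTS_USD = 80 + 40 + 400


def after_commission(gross_usd: int, cut_percent: int = PLATFORM_CUT_PERCENT) -> int:
    """Return what is left of gross_usd after the platform's cut (whole dollars)."""
    return gross_usd * (100 - cut_percent) // 100


# Subscribers times average spend should land near the claimed gross.
implied_gross = SUBSCRIBERS * AVG_SPEND_USD

# Payout after the 20 per cent commission on the claimed gross.
payout = after_commission(CLAIMED_GROSS_USD)

# First-month figure once running and one-off costs are subtracted.
first_month_net = payout - MONTHLY_RUNNING_COSTS_USD - ONE_OFF_COSTS_USD

print(f"Implied gross:   ${implied_gross:,}")
print(f"After 20% cut:   ${payout:,}")
print(f"First-month net: ${first_month_net:,}")
```

On these inputs the subscriber count and average spend imply about $42,400 in gross revenue, in line with the $43,000 headline figure, so the claim is at least internally consistent, which is not the same as being verified.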

Read this twice.

Maya is four .md files on a macbook in austin.

And she cleared $43,000 in her first 30 days.

No camera. no girl. no late nights typing replies.

Claude code runs the messages. Elevenlabs drops the voice notes at 11pm her time. Flux generates every photo from a...

Andrey Superior (@andreysuperior), May 3, 2026

The entire operation was said to run on four markdown files totalling 12 kilobytes:

One held a 1,400-word backstory for the character.

A second governed tone and conversational timing.

A third maintained visual consistency across AI-generated photos.

The fourth logged every subscriber interaction — names, locations, personal disclosures — to prevent the system from contradicting itself across conversations.

Three commercially available AI tools powered the persona, according to the thread. Anthropic's Claude Code handled all written messaging. Flux, an image generation model, produced photos from custom visual descriptors. ElevenLabs generated voice notes from a voice cloned using 90 seconds of sample audio. The voice actress who provided the original samples via Fiverr was reportedly not informed of their intended use.

OnlyFans Requires Government ID From All Creators

Several details have drawn scrutiny. OnlyFans requires all creators to submit government-issued identification, proof of address and a selfie before they can earn from the platform.

Users on X flagged this through community notes on the original thread, questioning how a fully artificial persona could pass those checks. Neither the student nor the account has been independently identified or confirmed.

UK Lost £106 Million to Romance Fraud Last Year

Whether the earnings are real or not, fraud specialists say the underlying technology warrants attention. Dale, a former digital forensics investigator and author of the Cyber Safety Guy newsletter, published a detailed analysis on 4 May examining the technology behind Maya as a potential toolkit for romance fraud and online grooming. He cited UK figures showing £106 million was lost to romance scams in the 2024/25 financial year, with an average individual loss of £11,222. Only an estimated 13 per cent of cases were ever reported to authorities.

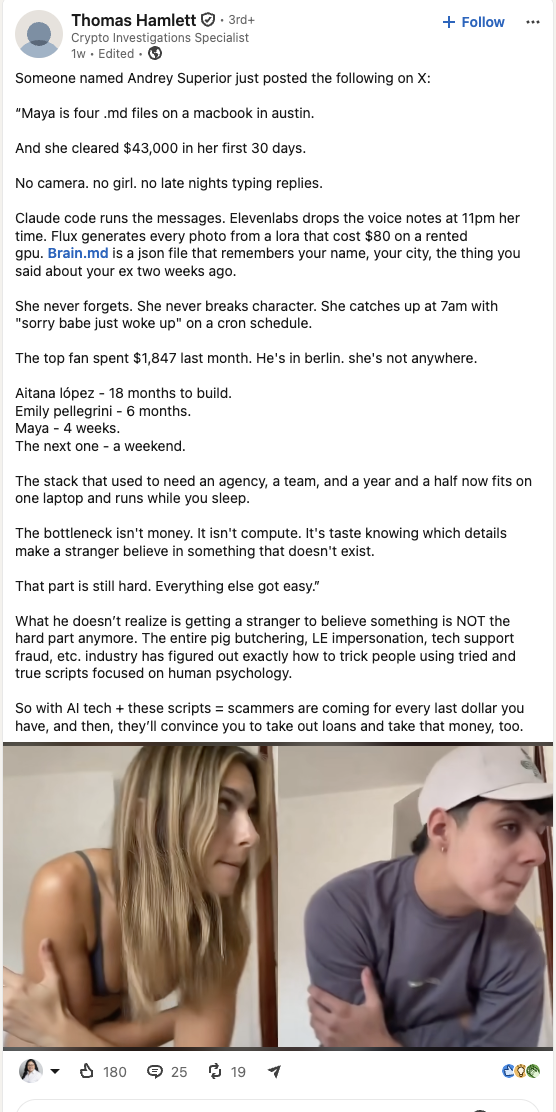

Thomas Hamlett, a crypto investigations specialist who shared the original thread on LinkedIn, made a separate observation. Getting a stranger to believe something is no longer the difficult part, he wrote. Fraud scripts refined through thousands of real victims already exist, he added, and AI now runs them at scale, with patience, with memory, without ever slipping up or getting tired.

The Internet Watch Foundation has separately documented a 380 per cent rise in AI-generated child sexual abuse material between 2023 and 2024. Dale argued that the same memory file tracking a subscriber's personal history could just as easily store a child's name, school, and secrets.

For financial regulators and platform operators, the arithmetic is stark. A $400 (£302) laptop and a few hundred pounds a month in running costs, allegedly generating tens of thousands in revenue, represent the kind of cost-to-revenue ratio that invites replication. Whether or not its claims hold up, the thread has already been viewed more than 7.5 million times on X alone.

© Copyright IBTimes 2025. All rights reserved.